Master your AI's memory in minutes

Get value in 60 seconds. Copy-paste these prompts:

recallium→ Loads your full project context"Remember we chose Postgres because..."→ Stores a decision"Scan this codebase, document each component, design, and flows in my memories"→ Stores a series of memories for later"What did we decide about the database?"→ Retrieves it instantly| What You Want | What to Say |

|---|---|

| Load context | recallium |

| Save a decision | "Remember we chose X because Y" |

| Find past work | "Search my memories for auth flow" |

| Search uploaded docs | "Search my documents for the API spec" |

| Resume work | "Where did we leave off?" |

| Find patterns | "What bugs keep recurring?" |

| Think through a problem | "Help me think through X vs Y" |

| Track a follow-up | "Create a task: migrate endpoints" |

| End of day | "Summarize what we did today and save it" |

The 5 things you'll use every day.

You:

"Remember this JWT implementation. Access tokens expire in 15 minutes, refresh tokens in 7 days, stored in HTTP-only cookies."

AI:

✓ Stored with type: code-snippet Files: src/auth/jwt.ts Tags: authentication, jwt, security

You:

"What did we decide about database choice?"

AI:

We chose MongoDB for user preferences because: - Schema flexibility for custom fields - Nested documents for preference hierarchies - Team has more MongoDB experience

You:

"I'm about to edit src/auth/jwt.ts. What should I know?"

AI:

Found 3 relevant memories: 1. JWT Refresh Implementation (2 weeks ago) 2. Bug Fix: Race Condition (1 week ago) 3. Security Enhancement (3 days ago) ⚠️ Token reuse detection is sensitive - test thoroughly if modifying refresh flow.

You:

"Show me everything we've done on authentication"

AI:

Found 8 memories: - 3 implementations (JWT, OAuth2, 2FA) - 2 decisions (JWT over sessions, 15min expiry) - 2 bug fixes (race condition, CORS) - 1 performance improvement (45ms → 8ms)

You:

"Where did we leave off?"

AI:

Last session (2 hours ago): - Implemented rate limiting for login endpoint - Created task: "Add integration tests for auth" - Pending: Review PR for token rotation fix Ready to continue?

Advanced features for deeper insights.

You:

"What patterns have emerged in our API error handling?"

AI:

Analyzing 23 memories across 4 projects... Common Patterns: 1. Centralized Error Middleware (3 projects) 2. Domain-Specific Exceptions (strong pattern) 3. Circuit Breaker for External APIs (emerging) Recommendation: Standardize on patterns 1+2.

You:

"Why do we keep having authentication bugs?"

AI:

Quality Analysis: Root Causes: 1. Race Conditions (3 occurrences) ⚠️ 2. Token Expiry Edge Cases (2 occurrences) 3. CORS Misconfigurations (2 occurrences) Recommendation: Add integration tests for concurrent auth scenarios (40% of bugs).

You:

"Help me think through migrating from REST to GraphQL"

AI:

🤔 Starting structured thinking... Branch 1: Big Bang Migration - Risk: HIGH, Timeline: 3-4 months Branch 2: Gradual Migration (Facade) - Risk: MEDIUM, Timeline: 6-8 months Branch 3: New Features Only - Risk: LOW, Timeline: Ongoing Recommendation: Branch 2 - proves value incrementally, team learns while productive.

You:

"Remind me to add rate limiting. Link to our auth work."

AI:

Task created: "Add rate limiting to login" Linked to: - Memory #1234: JWT implementation - Memory #1245: Auth bug fix Full context available when you return!

One memory layer across all your tools and projects.

Architect researches and documents in Claude Desktop, then switches to Cursor to implement.

You:

"Research best practices for rate limiting APIs. Save your findings to my memories."

Claude:

✓ Stored research on rate limiting: - Token bucket vs sliding window algorithms - Redis-based distributed rate limiting - Recommended: 100 req/min for auth endpoints

You:

"What did we research about rate limiting?"

Cursor:

Found your research from Claude Desktop: - Token bucket recommended for API rate limiting - Use Redis for distributed systems - 100 req/min for auth, 1000 req/min for general Ready to implement based on these findings?

Reuse proven patterns across your codebase.

You:

"In the payment-service project, how did we implement error handling middleware? I want to use the same pattern here."

AI:

Found error handling pattern from payment-service: 1. Centralized ErrorMiddleware class 2. Custom exceptions: ValidationError, AuthError, NotFoundError 3. Each maps to HTTP status + error code 4. Correlation ID logging for tracing Files: src/middleware/errorHandler.ts Want me to adapt this pattern for your current project?

You:

"What authentication patterns work best across all my projects?"

AI:

Analyzing 5 projects... Strongest patterns: 1. JWT + refresh rotation (4 projects, 0 issues) 2. Redis session store (3 projects, stable) 3. RBAC middleware (2 projects, scaling well) Avoid: Cookie-only auth caused CORS issues in 2 projects.

You:

"What did I work on yesterday? I used VS Code in the morning and Cursor in the afternoon."

AI:

Yesterday's work (both IDEs): Morning (VS Code): - Fixed auth middleware bug - Added rate limiting research Afternoon (Cursor): - Implemented rate limiter based on research - Created task: "Add rate limit tests" All context unified regardless of which tool you used.

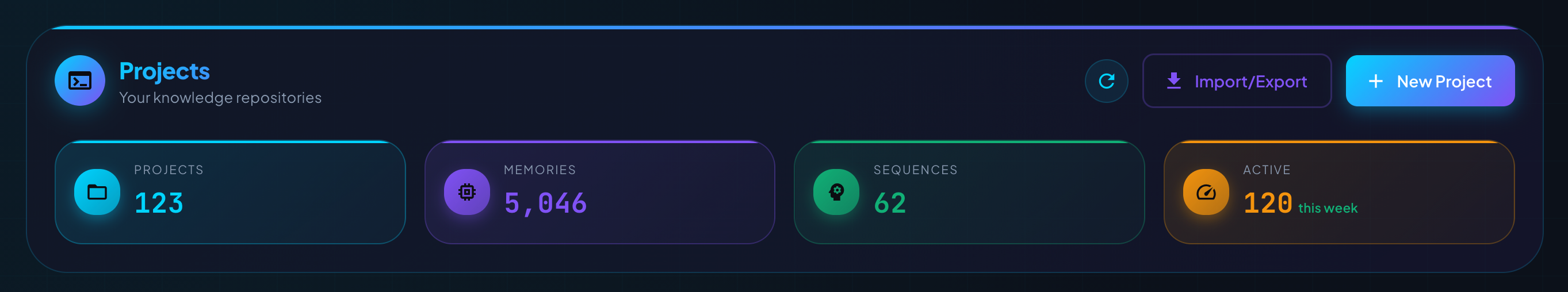

Stay current, protect your memories, and move between machines seamlessly.

Upgrading is simple — just run the start script again:

# Linux/macOS ./start-recallium.sh # Windows start-recallium.bat

The script automatically pulls the latest version and restarts with your existing data intact.

We ship improvements weekly — new memory types, better search, UI enhancements, and integrations with the latest AI tools.

Stay protected with the latest security updates. We patch vulnerabilities as soon as they're discovered.

Each release includes optimizations — faster search, reduced memory usage, and improved embedding performance.

We continuously fix issues reported by the community. Upgrading ensures you have the most stable experience.

Tip: Check the changelog to see what's new in each release.

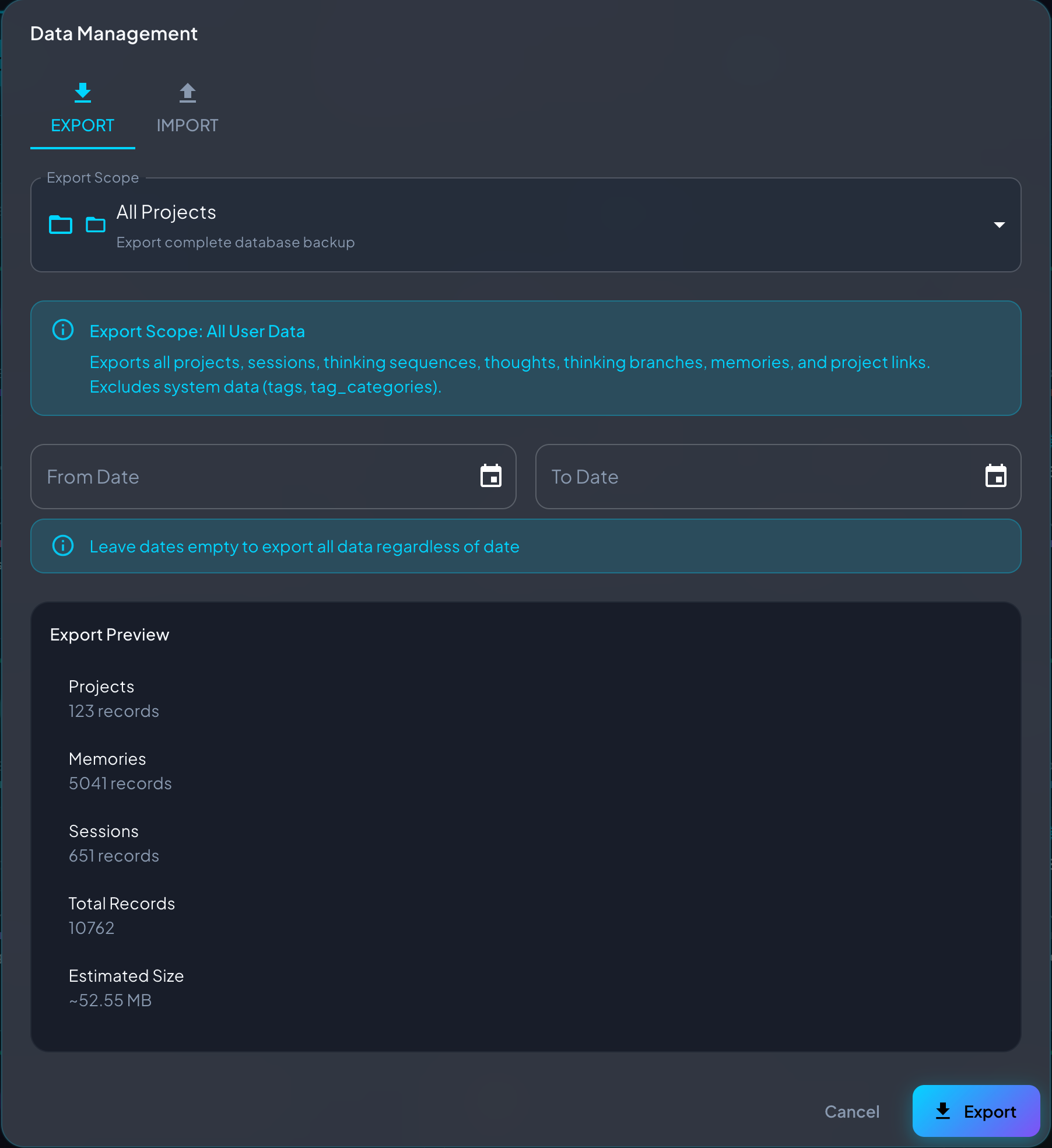

Before updating to a new version, export your data. This ensures you can restore if anything goes wrong.

Recallium exports a complete snapshot of your data:

| Data | Details |

|---|---|

| Memories | All content, tags, types, and metadata |

| Embeddings | Pre-computed vectors (no re-processing needed on import) |

| Documents | Uploaded PDFs, text files, and their chunks |

| Document Files | Original uploaded files preserved in ZIP |

| Projects | Project definitions and settings |

| Sessions | Session history and thinking sequences |

The fastest way to backup — use the script in your install folder:

# Linux/macOS ./download-memories.sh # Windows download-memories.bat # Creates: recallium-export-20260403-143052.zip

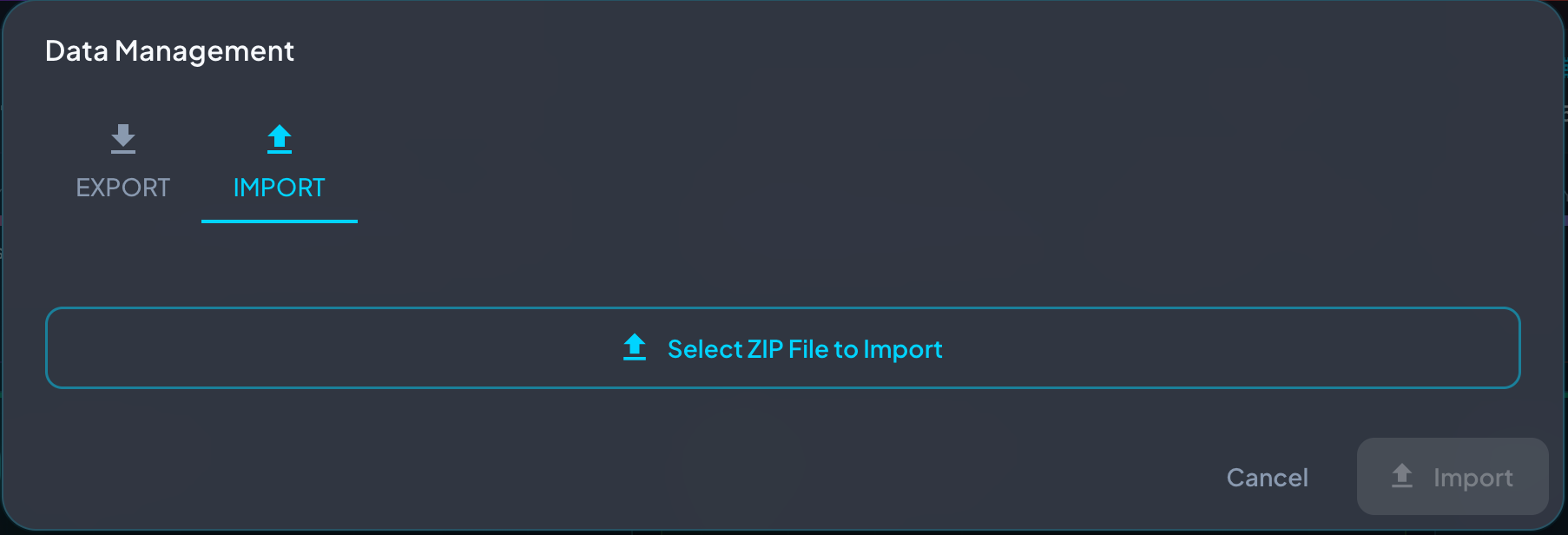

Move your complete memory system to a new computer or server.

./download-memories.sh # Copy the ZIP file to your new machine

# Install Recallium first, then: # Open Data Management → Import tab # Select your ZIP file # Choose "Merge" mode # Click Import

| Mode | Use When |

|---|---|

Merge | Add to existing data (recommended) |

Replace | Clear all data first, then import |

Fresh | Empty database only |

When importing, you can map source projects to different destinations:

Useful for consolidating projects or renaming during migration.

# Export all data curl -o "backup.zip" http://localhost:8001/api/data/export # Export specific project curl -o "project.zip" "http://localhost:8001/api/data/export?project_id=5" # Preview without downloading curl http://localhost:8001/api/data/export/preview

Recallium's value compounds over time. Build these rituals:

recallium"Save what we just did: [describe changes]""Summarize what we accomplished and save it""Where did we leave off?""What context do we have for [filename]?"Your AI will find past implementations, warnings, and decisions you've forgotten.

Don't save after every line. One comprehensive memory beats ten fragments.

Future you needs the reasoning, not just the decision.

Makes memories searchable by file — critical for "before editing" workflow.

If you solved something hard, save it. Your AI can't remember what you don't tell it.

"Save this""Remember this JWT implementation with refresh token rotation"Look for the AI's confirmation: "✓ Stored with type: ..."

"getUserByIdAndValidateToken""Search my memories for user authentication"Semantic search finds related concepts — use natural language.

"Find my work""Find my authentication work in the payment-service project"Include project name and topic for precise results.

"Show me everything""Find recent authentication decisions in src/auth/"Use filters: recent_only, memory_type, file_path.

Start with the 60-second quick win, then build the daily habits. Your AI will get smarter with every session.